
Future of Autonomous Vehicle Technology – Vision Vs Sensors

trantorindia | Updated: May 16, 2019

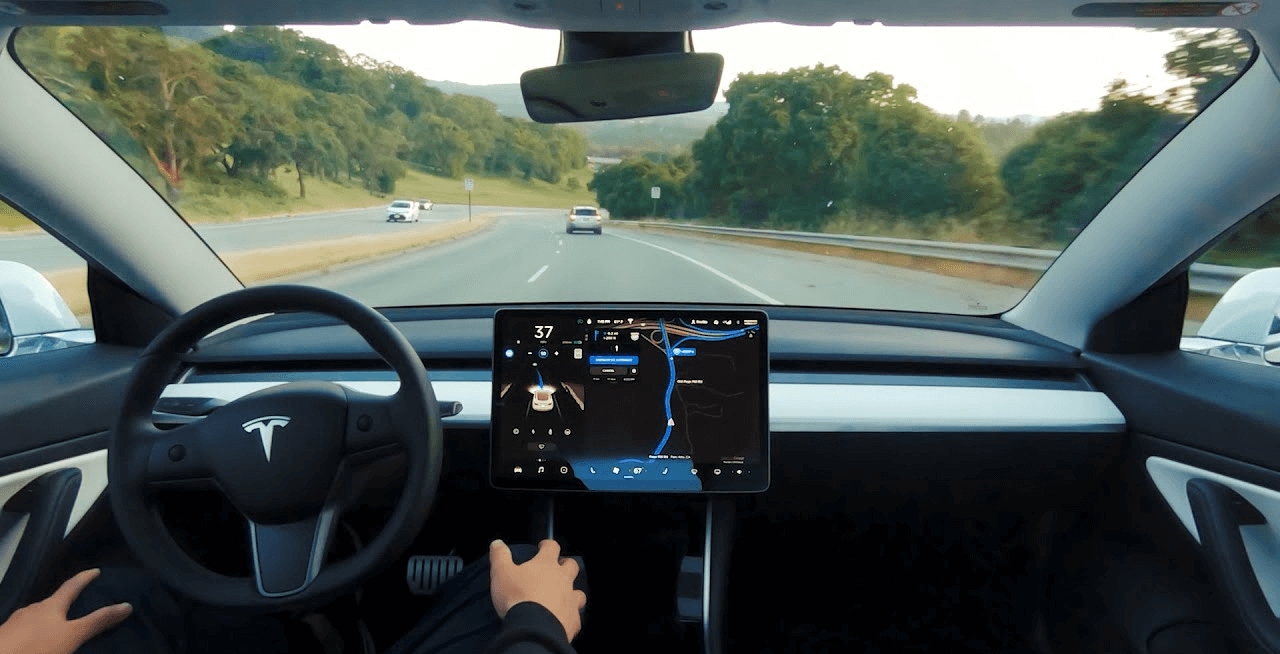

Autonomy is quickly becoming the driving force of the automobile industry. Leading autonomous car makers (GM, Waymo, Tesla, Ford, etc.) are all aggressively improving the infrastructure necessary for autonomy. With multiple autonomous vehicle technologies in play, the market is hotly debating which one is better: vision-based (camera) or sensor-based (LiDAR, radar, and ultrasonic).

Currently, pretty much all active players in the market, with the exception of Tesla, are moving forward with LiDAR. Tesla, on the other hand, is betting solely on cameras/vision.

Before we get into the thick of the discussion, let’s quickly go through these autonomous vehicle technologies (LiDAR, radar, and camera).

LiDAR

LiDAR (Light Detection and Ranging) devices are active sensors that emit laser pulses in quick succession (up to 150k pulses/sec). They measure the time each pulse takes to bounce back and use it to calculate the distance between the vehicle and obstacles with high precision. The main concerns with LiDAR are that it is expensive and cannot recognize colors or read traffic signs.
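The time-of-flight idea described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular LiDAR unit is implemented; the function name and the 200-nanosecond example value are assumptions made for this sketch.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance in metres for a pulse's round-trip time.

    Hypothetical helper for illustration only.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the obstacle and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


# Assumed example: a pulse that returns after 200 nanoseconds.
print(f"{distance_from_round_trip(200e-9):.2f} m")  # prints "29.98 m"
```

Because light covers roughly 30 cm per nanosecond, the precision of the distance depends directly on how finely the sensor can time each returning pulse.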

Radar

LiDAR and radar work on essentially the same principle: both emit electromagnetic waves and time their reflections, but at very different frequencies. LiDAR uses laser light, while radar uses much lower-frequency radio waves and therefore produces less detailed results (see the image below). While cost is not an issue with radar, it also cannot recognize colors or read signs, which are crucial for autonomous driving.

Ultrasonic is another autonomous vehicle technology in wide use today. Unlike LiDAR and radar, ultrasonic sensors use sound waves to measure the distance to obstacles.

Camera

Being a vision-based device, a camera estimates the distance to an object based on the object's relative size in the captured frame. The main advantage of a camera is that it can recognize traffic signs and traffic light colors, which the other sensors cannot. Low hardware cost is another advantage, but given the amount of effort that goes into training camera-based systems to achieve sensor-level accuracy, it is not a very cost-effective solution either.
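To make the "relative size" idea concrete, here is a minimal sketch based on the textbook pinhole camera model, in which distance is the focal length times the real object height divided by the object's height in pixels. Production systems rely on trained neural networks rather than this formula; the function name, the 1.5 m vehicle height, and the 1000-pixel focal length are all assumed values for illustration.

```python
def estimate_distance_m(real_height_m: float,
                        focal_length_px: float,
                        pixel_height: float) -> float:
    """Estimate distance to an object of known height (pinhole camera model).

    Hypothetical helper: assumes the real height and focal length are known.
    """
    if pixel_height <= 0:
        raise ValueError("pixel height must be positive")
    # Similar triangles: pixel_height / focal_length = real_height / distance
    return focal_length_px * real_height_m / pixel_height


# Assumed scene: a 1.5 m tall car seen by a camera with a 1000 px focal length.
for pixel_height in (100.0, 50.0, 25.0):
    distance = estimate_distance_m(1.5, 1000.0, pixel_height)
    print(f"{pixel_height:.0f} px tall -> about {distance:.1f} m away")
```

Each halving of the apparent size doubles the estimated distance, so a one-pixel measurement error matters far more for distant objects than for nearby ones. That is one reason camera-only ranging is harder than direct measurement.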


Now that we have brushed up on the basics of the main autonomous vehicle technologies, let’s see how they are faring in the real world.

Current Industry State

It is clear that no single technology, be it vision or sensors, can provide the ultimate solution for driverless cars. As a senior analyst at Navigant puts it, “Layering sensors with different capabilities, rather than just relying on a purely vision-based system, is ultimately a safer and more robust solution.”
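One simple way to picture "layering" is to combine distance estimates from several sensors, giving more weight to the more precise ones. The sketch below uses inverse-variance weighting, a standard statistical technique chosen here purely for illustration; it is not a description of any carmaker's actual fusion pipeline, and the readings and error figures are made up.

```python
def fuse_estimates(estimates):
    """Fuse (distance_m, std_dev_m) pairs by inverse-variance weighting.

    Returns (fused_distance_m, fused_std_dev_m). Hypothetical helper.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(std <= 0 for _, std in estimates):
        raise ValueError("standard deviations must be positive")
    # A sensor with a smaller error (std dev) gets a larger weight.
    weights = [1.0 / (std * std) for _, std in estimates]
    total_weight = sum(weights)
    fused = sum(w * d for w, (d, _) in zip(weights, estimates)) / total_weight
    return fused, (1.0 / total_weight) ** 0.5


# Made-up readings: the camera says 32 m (+/- 2 m), LiDAR says 30 m (+/- 0.1 m).
camera = (32.0, 2.0)
lidar = (30.0, 0.1)
distance, std_dev = fuse_estimates([camera, lidar])
print(f"fused distance: {distance:.2f} m, uncertainty: {std_dev:.3f} m")
```

With these assumed numbers, the fused value sits almost on top of the LiDAR reading, which illustrates why the more precise ranging sensor tends to dominate distance estimates while the camera contributes what it is uniquely good at: colors and signs.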

So, the question here is, which one should be used as the primary autonomous vehicle technology, and which one as the secondary? As mentioned earlier, LiDAR is the industry’s first choice for driverless cars. The main reason is that cameras estimate the distance to objects based on their relative size, whereas LiDAR sensors measure it with high precision.

However, Tesla’s CEO Elon Musk, who has long criticized LiDAR, recently called it “a fool’s errand” at Tesla’s Autonomy Day and claimed that everyone will drop it in the near future. As absurd as it sounds, Musk backed his claim with some reasoning (although it may sound unreasonable to most). First, LiDAR is costly hardware. Second, he demonstrated how cameras are getting better and better at achieving sensor-level accuracy.

Currently, cost is undeniably the biggest drawback of LiDAR; however, all active players are putting an enormous amount of effort into bringing its cost down. For instance, in 2017, Google-backed Waymo claimed that it would build its own LiDAR sensors and reduce the cost from $75,000 to $7,500 apiece. In March 2019, Waymo followed up by announcing that it would soon start selling its in-house LiDAR devices to third parties.

Another drawback is that LiDAR becomes inaccurate in fog, rain, and snow, as its high-frequency signals detect these small particles and include them in the rendering. Radar, on the other hand, works quite effectively in such weather conditions and also costs considerably less; it is therefore arguably a better choice than LiDAR as the secondary autonomous vehicle technology used in conjunction with cameras.


What the Future Holds for Autonomous Vehicle Technology

Given the ongoing developments, the future is likely to unfold in one of the following two ways:

- Camera-based vision systems achieve LiDAR-level accuracy

- LiDAR becomes cheaper than the cost of improving camera systems

Which will happen first is hard to say with any certainty. LiDAR prices have dropped over the last few years, and camera image processing has improved as well. Tesla, with its Fleet Learning capabilities, is betting heavily on cameras and argues that its systems are designed to replicate human behavior, recognizing the world through colors and signs rather than by measured distance. However, discarding LiDAR completely is also not an option at this stage. So, the next few years will be crucial in deciding which direction autonomous vehicles take.