
AI Agent Orchestration for Enterprise Workflows: A Practical Guide

trantorindia | Updated: May 8, 2026

There is a moment in every enterprise AI program when the single-agent model stops being enough.

The proof-of-concept worked. An AI agent handling customer ticket triage, or drafting procurement briefs, or summarizing earnings calls — it worked well enough that leadership started asking the next question: can we do this at scale, across workflows, across departments? That is when the fundamental architectural challenge surfaces. A single AI agent, no matter how capable, is like a brilliant freelancer working alone. It can handle its defined scope with skill. But it cannot run an enterprise workflow.

AI agent orchestration is the discipline of designing, deploying, and governing networks of specialized AI agents that coordinate with each other to execute complex, multi-step enterprise workflows — the way an orchestra performs, with every instrument playing its specialized part under coordinated direction.

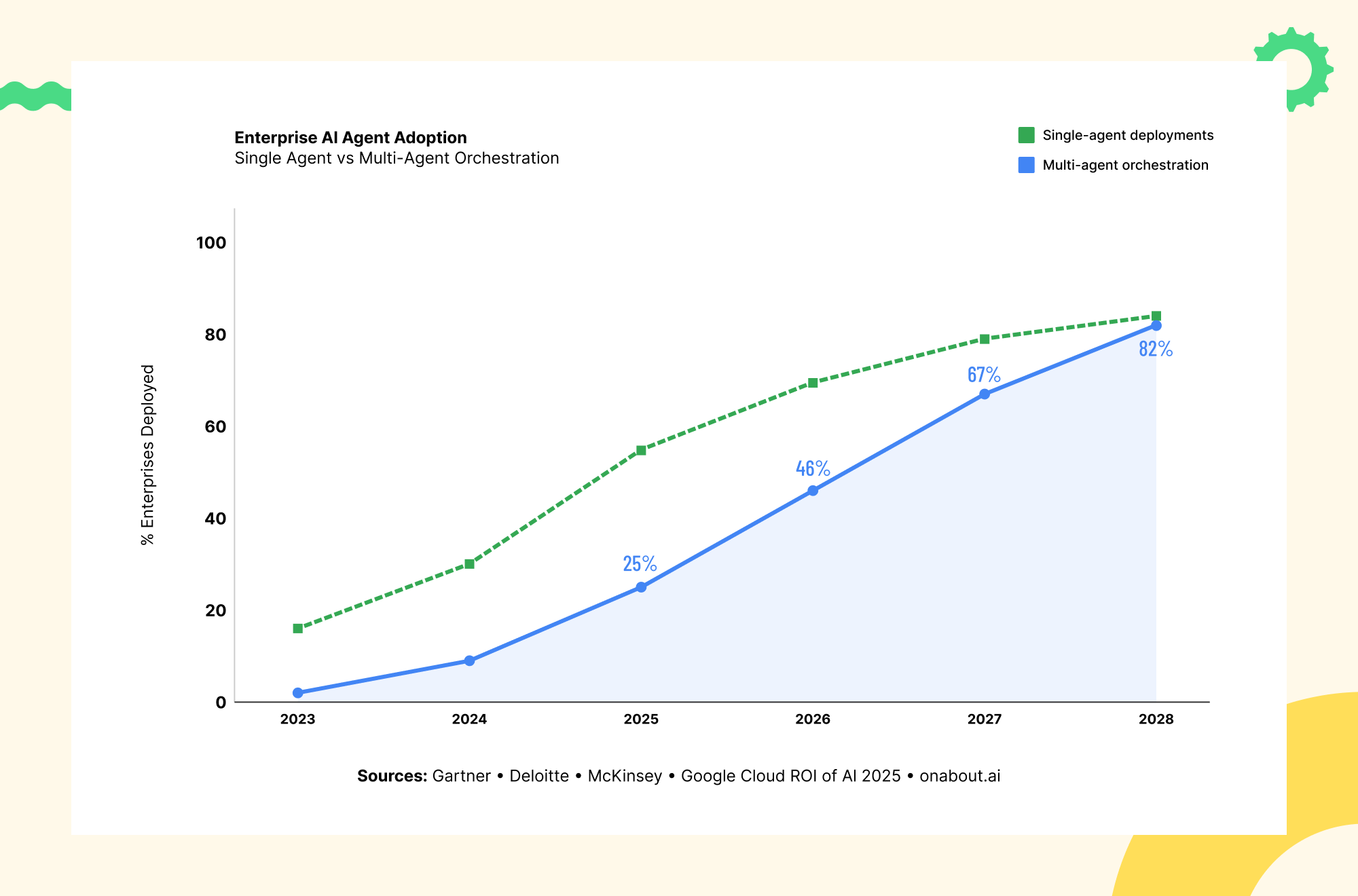

The numbers validate the urgency. Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. McKinsey estimates that generative AI could add $2.6 trillion to $4.4 trillion in value annually across the business use cases it analyzed, and capturing that value in complex, multi-step workflows depends on orchestrated multi-agent systems rather than isolated single-agent deployments.

What Is AI Agent Orchestration? Definition and Core Concepts

AI agent orchestration is the coordination layer that enables multiple specialized AI agents to work together as a unified system — sharing context, dividing labor, passing work between each other, and collectively executing workflows that exceed the capability of any single agent.

The distinction from what came before matters for architecture decisions. Robotic process automation (RPA) and traditional security orchestration, automation, and response (SOAR) tools followed static playbooks: when the process deviated from the script, automation stopped. AI agent orchestration operates on goals, not scripts. An orchestrated multi-agent system can reason about what needs to happen, divide work among specialized agents, adapt when circumstances change, and synthesize results without human intervention at every step.

The Three Layers of an Orchestrated System

Intelligence layer: Where the AI models live — the LLMs powering reasoning, language understanding, and decision-making within individual agents.

Orchestration layer: The coordination infrastructure — deciding which agents are invoked, in what sequence or parallel, how context is passed, where human approval is required, and how errors are handled. This is the most underestimated and most failure-prone layer.

Integration layer: The connectivity infrastructure — APIs, MCP servers, tool connectors, and data pipelines that allow agents to access and act on enterprise systems: CRM, ERP, SIEM, ITSM, databases, and third-party services.
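To make the orchestration layer's responsibilities concrete, here is a minimal Python sketch of a declarative workflow spec. It assumes a hypothetical `Step`/`Workflow` model (not any framework's API) in which each step names its agent, its dependencies, whether a human must approve it, and a retry budget. The `execution_waves` method shows how an orchestrator could derive which steps run in sequence and which can run in parallel.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Step:
    """One unit of work in a hypothetical workflow spec."""
    name: str
    agent: str                         # specialized agent that runs this step
    depends_on: tuple[str, ...] = ()   # steps whose output this step needs
    requires_approval: bool = False    # human approval gate before running
    max_retries: int = 1               # error-handling policy for this step


@dataclass
class Workflow:
    name: str
    steps: list[Step] = field(default_factory=list)

    def execution_waves(self) -> list[list[str]]:
        """Group steps into waves. Steps in the same wave do not depend on
        each other and can run in parallel; waves run in sequence."""
        done: set[str] = set()
        remaining = {s.name: s for s in self.steps}
        waves: list[list[str]] = []
        while remaining:
            ready = sorted(n for n, s in remaining.items() if set(s.depends_on) <= done)
            if not ready:
                raise ValueError("cycle or unknown dependency in workflow")
            waves.append(ready)
            done.update(ready)
            for n in ready:
                del remaining[n]
        return waves

    def approval_gates(self) -> list[str]:
        """Steps where the orchestrator must pause for a human."""
        return [s.name for s in self.steps if s.requires_approval]


loan = Workflow("loan_origination", [
    Step("extract_documents", agent="document_agent"),
    Step("credit_analysis", agent="credit_agent", depends_on=("extract_documents",)),
    Step("fraud_check", agent="fraud_agent", depends_on=("extract_documents",)),
    Step("compliance_review", agent="compliance_agent",
         depends_on=("credit_analysis", "fraud_check")),
    Step("decision_summary", agent="summary_agent",
         depends_on=("compliance_review",), requires_approval=True),
])

print(loan.execution_waves())
# [['extract_documents'], ['credit_analysis', 'fraud_check'], ['compliance_review'], ['decision_summary']]
print(loan.approval_gates())  # ['decision_summary']
```

Credit analysis and fraud checks land in the same wave because neither depends on the other. The intelligence and integration layers would sit behind each `agent` name.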

Why Single Agents Are Not Enough: The Case for Multi-Agent Orchestration

To see why orchestration matters, start with where single-agent deployments hit their ceiling.

Domain overload. A single agent instructed to handle loan origination end to end (documents, credit analysis, compliance, fraud detection, decision summary, customer communication) is being asked to be an expert in too many specialized domains at once. Specialization at the agent level tends to translate into accuracy and reliability at the workflow level.

Sequential processing bottlenecks. Single agents process tasks sequentially. Multi-agent orchestration enables parallel execution — and the economics can be compelling. At $80/hour developer time, a 20-minute time saving per task at 100 tasks per day translates to $2,667 in daily value against orchestration infrastructure costs that are typically far lower.
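The arithmetic behind that figure is simple enough to check and reuse with your own inputs. This sketch reproduces the $2,667 estimate; the daily infrastructure cost in the second call is a placeholder assumption, not a figure from the source.

```python
def daily_value(hourly_rate: float, minutes_saved_per_task: float, tasks_per_day: int) -> float:
    """Value of developer time saved per day."""
    hours_saved_per_task = minutes_saved_per_task / 60
    return hourly_rate * hours_saved_per_task * tasks_per_day


def net_daily_value(hourly_rate: float, minutes_saved_per_task: float,
                    tasks_per_day: int, daily_infra_cost: float) -> float:
    """Daily value after subtracting orchestration infrastructure cost."""
    return daily_value(hourly_rate, minutes_saved_per_task, tasks_per_day) - daily_infra_cost


print(f"${daily_value(80, 20, 100):,.0f} per day")  # $2,667 per day

# Placeholder: substitute your measured infrastructure cost per day.
print(f"${net_daily_value(80, 20, 100, daily_infra_cost=500):,.0f} net per day")  # $2,167 net per day
```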

Accuracy plateaus. Many enterprises find that prompt engineering and model upgrades push single-agent accuracy to 88-90% on complex workflows and then stall. That ceiling is often an architecture limitation rather than a model limitation: multi-agent systems with verification agents and feedback loops can push accuracy beyond it.

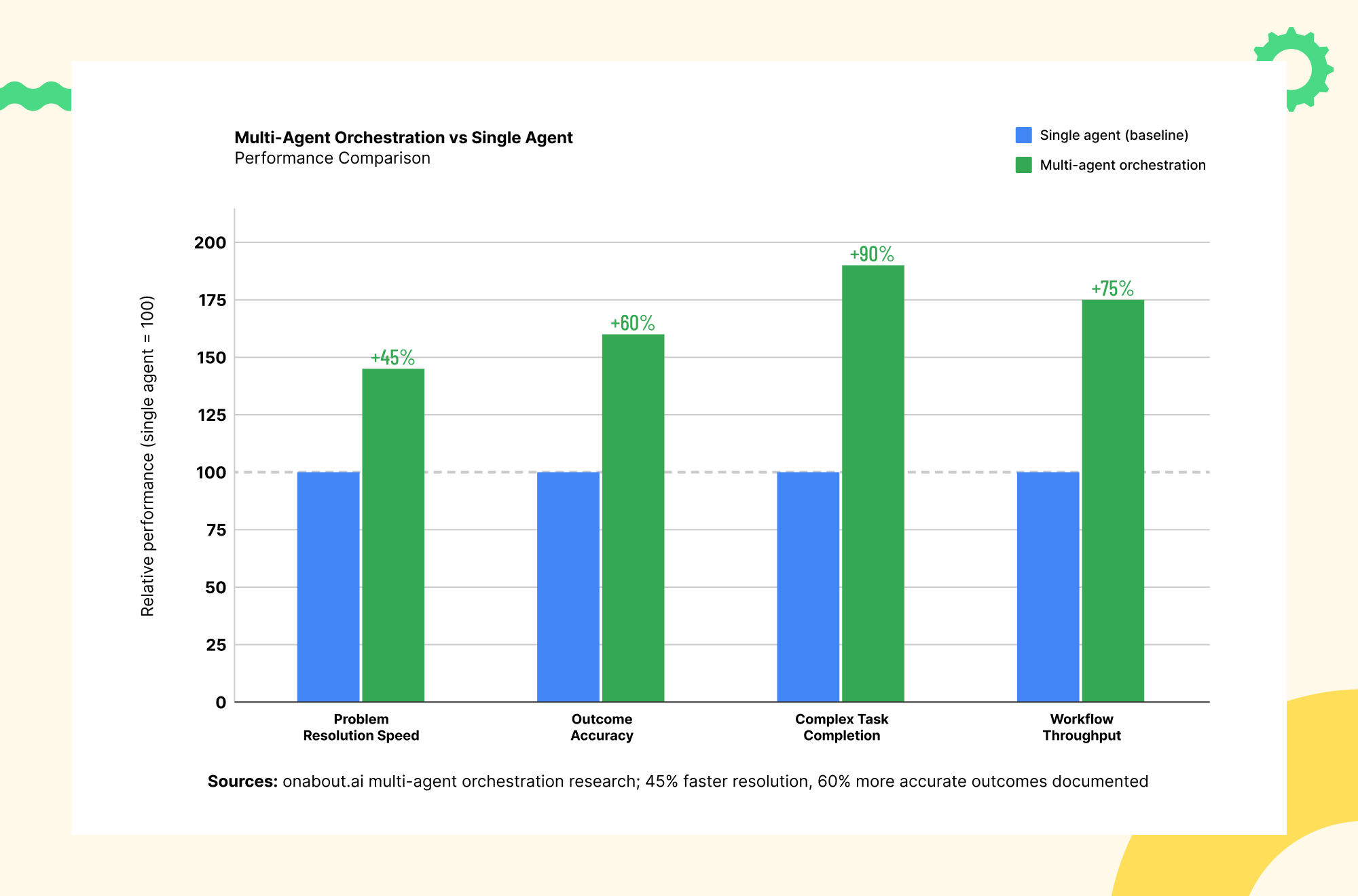

Multi-Agent Orchestration vs Single Agent: Performance Comparison

Anthropic’s internal research illustrates the effect: in its multi-agent research system, a lead agent plans the strategy while sub-agents gather data in parallel, and this setup outperformed a single-agent configuration by 90.2% on Anthropic’s internal evaluations. Meanwhile, according to the Salesforce Connectivity Report 2026, 50% of AI agents currently operate in isolated silos rather than as part of a multi-agent system; that isolation is exactly the ceiling orchestration is built to break through.

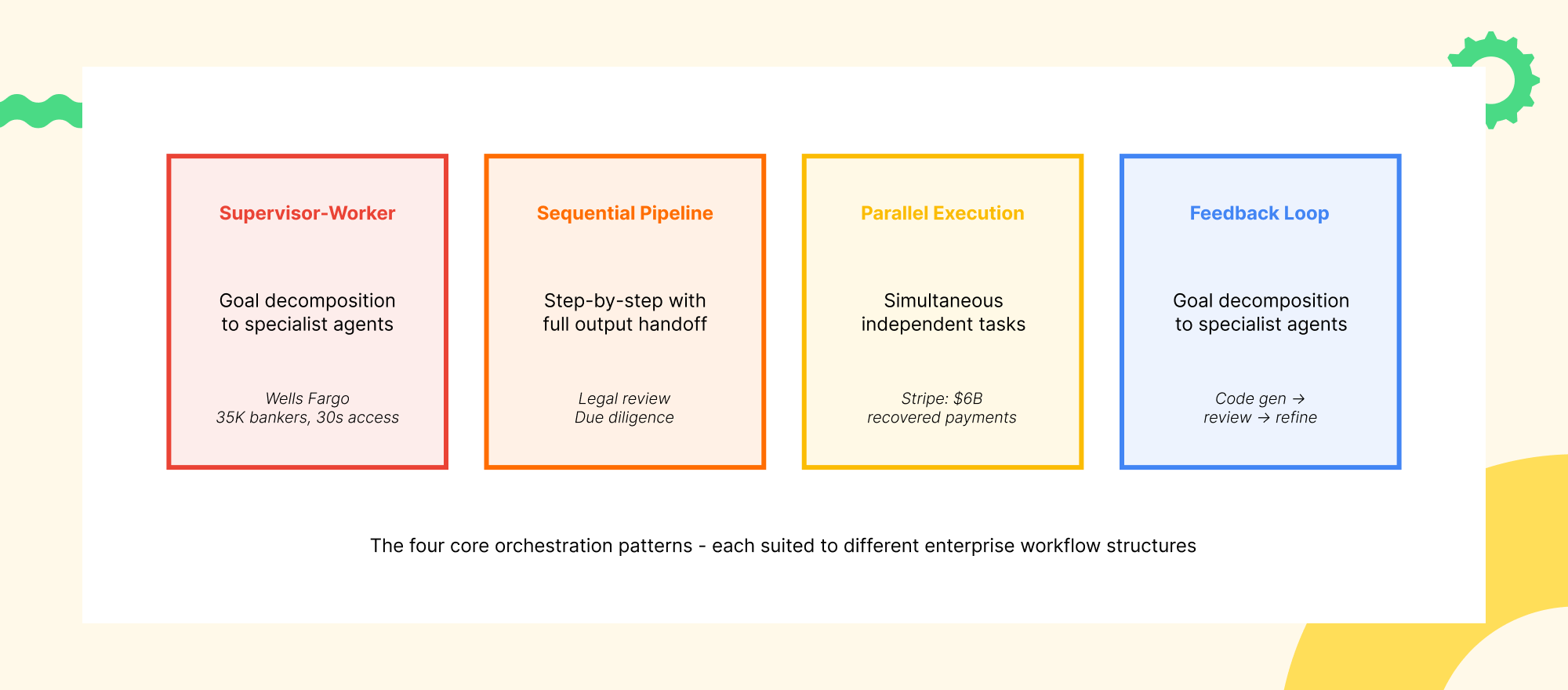

The Four Core Architecture Patterns for Enterprise AI Orchestration

The Four Orchestration Architecture Patterns

Pattern 1: The Supervisor-Worker Pattern (Hierarchical Orchestration)

A central orchestrator agent receives a high-level goal, decomposes it into subtasks, routes those subtasks to specialized worker agents, monitors execution, and synthesizes outputs into a coherent result. This is the most widely applicable pattern for enterprise workflows.

Wells Fargo’s deployment gave 35,000 bankers access to 1,700 internal procedures, cutting lookup time from 10 minutes to 30 seconds. The supervisor agent handles natural language queries, routes information retrieval to specialized knowledge agents, and synthesizes results into actionable guidance. The governance implication: the supervisor becomes the natural control point for human-in-the-loop (HITL) oversight across the entire workflow.
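A minimal Python sketch of the supervisor-worker shape follows. The workers are stub functions and the planner is a keyword router standing in for an LLM planning call; all names (`Supervisor`, `policy_agent`, `product_agent`, the `approve` hook) are hypothetical. The `approve` callback marks where the supervisor acts as the HITL control point: every dispatch passes through it and is logged.

```python
from typing import Callable

Worker = Callable[[str], str]          # subtask -> result
Approver = Callable[[str, str], bool]  # (worker, subtask) -> allowed?


def policy_agent(task: str) -> str:
    # Stub: a real knowledge agent would search the procedure library.
    return f"[policy] relevant procedure for: {task}"


def product_agent(task: str) -> str:
    # Stub: a real agent would query product or account systems.
    return f"[product] account details for: {task}"


class Supervisor:
    def __init__(self, workers: dict[str, Worker], approve: Approver = lambda w, t: True):
        self.workers = workers
        self.approve = approve
        self.log: list[tuple[str, str, bool]] = []  # audit trail of dispatches

    def decompose(self, goal: str) -> list[tuple[str, str]]:
        # Placeholder planner; an LLM would normally produce this plan.
        text = goal.lower()
        plan = []
        if "procedure" in text or "policy" in text:
            plan.append(("policy", f"find procedure: {goal}"))
        if "account" in text or "product" in text:
            plan.append(("product", f"look up account rules: {goal}"))
        return plan or [("policy", goal)]

    def run(self, goal: str) -> str:
        results = []
        for worker_name, subtask in self.decompose(goal):
            allowed = self.approve(worker_name, subtask)  # HITL checkpoint
            self.log.append((worker_name, subtask, allowed))
            if allowed:
                results.append(self.workers[worker_name](subtask))
        # Synthesis: a real supervisor would have an LLM merge these results.
        return "\n".join(results)


sup = Supervisor({"policy": policy_agent, "product": product_agent})
print(sup.run("What is the procedure for closing a joint account?"))
```

Because routing, approval, and logging all live in one place, swapping the stubs for real agents does not change where oversight happens.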

Pattern 2: The Sequential Pipeline Pattern

Executes agents in a defined order where each output becomes the next input. Content creation (research → synthesis → writing → compliance review → formatting), due diligence workflows, regulatory filing preparation, and report generation are natural fits. LangGraph’s stateful graphs with checkpointing are particularly well suited: a failure at step 7 of a 12-step pipeline recovers from step 7, not step 1.
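The checkpoint-and-resume behavior can be sketched without any framework. This is not LangGraph’s API (LangGraph provides its own checkpointer interface for persisting graph state); it is a hypothetical `CheckpointedPipeline` that saves each stage’s output in memory so a rerun skips completed stages. The stage functions and the simulated outage are illustrative stubs.

```python
from typing import Callable

Stage = Callable[[str], str]


class CheckpointedPipeline:
    """Runs stages in order and saves each stage's output, so a rerun after
    a failure skips completed stages and resumes at the one that failed."""

    def __init__(self, stages: list[tuple[str, Stage]]):
        self.stages = stages
        self.checkpoints: dict[str, str] = {}  # stage name -> saved output

    def run(self, initial_input: str) -> str:
        data = initial_input
        for name, stage in self.stages:
            if name in self.checkpoints:      # finished on an earlier run
                data = self.checkpoints[name]
                continue
            data = stage(data)                # may raise; earlier checkpoints survive
            self.checkpoints[name] = data
        return data


calls = {"research": 0, "writing": 0, "compliance": 0}
state = {"compliance_down": True}  # simulate a transient outage on the first attempt


def research(text: str) -> str:
    calls["research"] += 1
    return text + " > research"


def writing(text: str) -> str:
    calls["writing"] += 1
    return text + " > draft"


def compliance(text: str) -> str:
    calls["compliance"] += 1
    if state["compliance_down"]:
        state["compliance_down"] = False
        raise RuntimeError("compliance service timeout")
    return text + " > approved"


pipeline = CheckpointedPipeline([("research", research), ("writing", writing),
                                 ("compliance", compliance)])
try:
    pipeline.run("brief")
except RuntimeError as err:
    print("first run failed:", err)

result = pipeline.run("brief")  # resumes at the compliance stage
print(result)  # brief > research > draft > approved
print(calls)   # {'research': 1, 'writing': 1, 'compliance': 2}
```

In production the checkpoint store would be durable (a database, for example) and keyed by run ID as well as stage name; the in-memory dict keyed only by stage name keeps the sketch short.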

Pattern 3: The Parallel Execution Pattern

Invokes multiple agents simultaneously for independent tasks, then aggregates results. Stripe’s multi-agent payment system, with three agents handling payment optimization, fraud detection, and recovery simultaneously, recovered $6 billion in payments in 2024 with a 60% year-over-year improvement in retry success rates. The takeaway: routing work across specialized parallel agents can outperform a single general-purpose super-agent.
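A minimal `asyncio` sketch of the parallel pattern, using three stub agents loosely named after the Stripe example. The agent logic, field names, and thresholds are invented for illustration and say nothing about how Stripe’s system actually works. `asyncio.gather` runs the independent agents concurrently and returns their results in call order, which keeps aggregation deterministic.

```python
import asyncio
import time


async def optimization_agent(payment: dict) -> dict:
    await asyncio.sleep(0.1)  # stands in for a model or API call
    return {"route": "processor_b" if payment["amount"] > 100 else "processor_a"}


async def fraud_agent(payment: dict) -> dict:
    await asyncio.sleep(0.1)
    return {"fraud_risk": "high" if payment["country_mismatch"] else "low"}


async def recovery_agent(payment: dict) -> dict:
    await asyncio.sleep(0.1)
    return {"retry_in_hours": 24 if payment["declined"] else None}


async def handle_payment(payment: dict) -> dict:
    # The three agents are independent, so they run concurrently.
    results = await asyncio.gather(
        optimization_agent(payment),
        fraud_agent(payment),
        recovery_agent(payment),
    )
    merged: dict = {}
    for partial in results:  # aggregation step
        merged.update(partial)
    return merged


start = time.perf_counter()
outcome = asyncio.run(handle_payment({"amount": 250, "country_mismatch": False, "declined": True}))
elapsed = time.perf_counter() - start
print(outcome)  # {'route': 'processor_b', 'fraud_risk': 'low', 'retry_in_hours': 24}
print(f"{elapsed:.2f}s")  # close to one agent's latency, not the sum of all three
```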

Pattern 4: The Feedback Loop Pattern (Self-Correction)

Incorporates verification and critique into the orchestration chain: one agent’s output is reviewed by another agent before the workflow proceeds. A coder agent produces an initial solution; a reviewer agent assesses it for security vulnerabilities and edge cases; the coder refines based on feedback — iterating until the reviewer approves. This is what enables multi-agent systems to achieve accuracy levels that exceed what any individual agent can reliably produce.
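Here is a minimal Python sketch of the coder/reviewer loop. Both agents are deterministic stubs (a real system would call a model for each), and `max_rounds` is an assumed cap that stops the loop and hands off to a human instead of iterating forever, which also bounds cost.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Review:
    approved: bool
    feedback: str


def coder_agent(task: str, feedback: Optional[str]) -> str:
    # Stub: a real coder agent would send the task plus feedback to a model.
    if feedback and "validate" in feedback:
        return "def handler(req):\n    validate(req.query)\n    return run(req.query)"
    return "def handler(req):\n    return run(req.query)"


def reviewer_agent(code: str) -> Review:
    # Stub standing in for an LLM security review of the draft.
    if "validate(" not in code:
        return Review(False, "req.query reaches run() unchecked; validate it first.")
    return Review(True, "approved")


def feedback_loop(task: str, max_rounds: int = 3) -> tuple[str, int]:
    """Alternate coder and reviewer until approval or the round cap."""
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        code = coder_agent(task, feedback)
        review = reviewer_agent(code)
        if review.approved:
            return code, round_no
        feedback = review.feedback  # the critique drives the next draft
    raise RuntimeError(f"not approved after {max_rounds} rounds; escalate to a human")


code, rounds = feedback_loop("write a request handler")
print(f"approved after {rounds} rounds")  # approved after 2 rounds
print(code)
```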

MCP and A2A: The Protocols That Make Orchestration Work at Scale

Model Context Protocol (MCP): The Tool Integration Standard

The Model Context Protocol, developed by Anthropic and now widely adopted across the industry, is the standardized interface through which AI agents connect to external tools, data sources, and systems. Before MCP, every agent framework required custom integration code for every external tool. MCP is the USB-C of agent tool integration: one standard interface, any tool.

As of 2026, MCP has crossed 200 server implementations covering databases, cloud platforms, communication tools, enterprise software, and specialized data sources. All five major agent frameworks have added MCP support. The practical enterprise implication: MCP dramatically reduces the integration cost of AI agent orchestration. Build or adopt an MCP server once, and make it available to all agents in the system.
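Under the hood, MCP messages are JSON-RPC 2.0. The sketch below builds the two requests an agent typically sends once a session is set up: `tools/list` to discover a server’s tools and `tools/call` to invoke one. The tool name `lookup_customer` and its arguments are hypothetical; in practice an MCP SDK handles the `initialize` handshake, request IDs, and transport (stdio or HTTP) for you.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC request IDs must be unique within a session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind MCP clients send."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# 1. Discover which tools the server exposes.
list_request = jsonrpc_request("tools/list")

# 2. Invoke one tool by name with arguments matching its input schema.
call_request = jsonrpc_request(
    "tools/call",
    {"name": "lookup_customer", "arguments": {"customer_id": "C-1042"}},
)

print(list_request)  # {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
print(call_request)
```

Because every MCP-capable agent speaks this same request shape, a server written once can be reused across agents, which is where the integration savings come from.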

Agent-to-Agent Protocol (A2A): The Coordination Standard

The Agent-to-Agent (A2A) protocol, now under the Linux Foundation with backing from 50+ companies including Microsoft, Google, and Salesforce, defines how AI agents from different frameworks discover each other, delegate tasks, and exchange results. Where MCP handles vertical integration (agent-to-tool), A2A handles horizontal integration (agent-to-agent).

A2A enables an orchestrated system to include a LangGraph agent, a CrewAI agent, and a Google ADK agent — all coordinating through a standard task interface regardless of which framework built them. Organizations that build on MCP and A2A now are building systems that will not require fundamental re-architecture as the framework landscape continues to evolve.
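The discovery-and-delegation idea can be sketched with a simplified registry. A2A agents advertise themselves through an Agent Card; the `AgentCard` dataclass below borrows that idea but uses simplified, hypothetical fields rather than the protocol’s actual schema, and the URLs are placeholders. Routing looks only at advertised skills, so the framework that built each agent never enters the decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCard:
    name: str
    framework: str            # informational only; routing ignores it
    url: str                  # placeholder endpoint
    skills: frozenset[str]


REGISTRY = [
    AgentCard("kyc-checker", "LangGraph", "https://agents.example.com/kyc",
              frozenset({"kyc", "identity"})),
    AgentCard("research-crew", "CrewAI", "https://agents.example.com/research",
              frozenset({"market_research"})),
    AgentCard("doc-parser", "Google ADK", "https://agents.example.com/docs",
              frozenset({"document_parsing", "kyc"})),
]


def discover(skill: str, registry: list[AgentCard] = REGISTRY) -> list[AgentCard]:
    """Return every registered agent advertising the skill, whatever its framework."""
    return [card for card in registry if skill in card.skills]


def delegate(skill: str, payload: dict) -> dict:
    candidates = discover(skill)
    if not candidates:
        raise LookupError(f"no agent advertises skill {skill!r}")
    target = candidates[0]
    # A real A2A client would send a task to target.url over HTTP;
    # here we only build the envelope that would be sent.
    return {"to": target.url, "skill": skill, "input": payload}


print([card.name for card in discover("kyc")])  # ['kyc-checker', 'doc-parser']
print(delegate("market_research", {"ticker": "ACME"}))
```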

The Framework Decision: Choosing the Right Engine for Your Orchestration

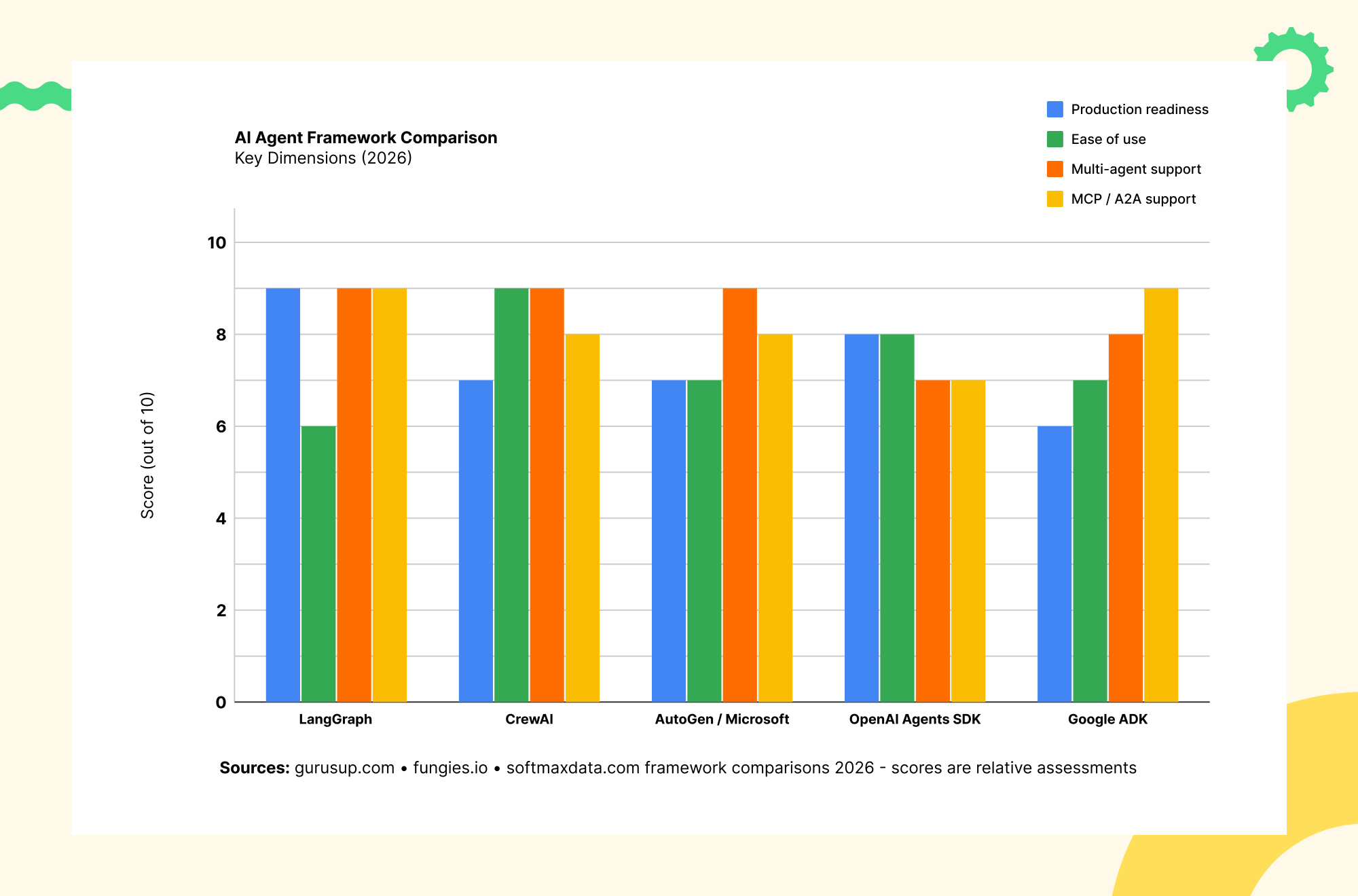

The AI agent framework landscape consolidated significantly in 2026. Five frameworks now account for the substantial majority of production deployments. The right choice depends on your use case, team profile, and existing technology stack.

AI Agent Framework Comparison — Key Dimensions (2026)

The practical guidance: start with CrewAI for prototyping and migrate critical workflow components to LangGraph as production requirements harden. CrewAI’s LangChain compatibility makes this a gradual transition rather than a disruptive rewrite.

Real-World Enterprise Use Cases: Where Orchestration Delivers Measurable ROI

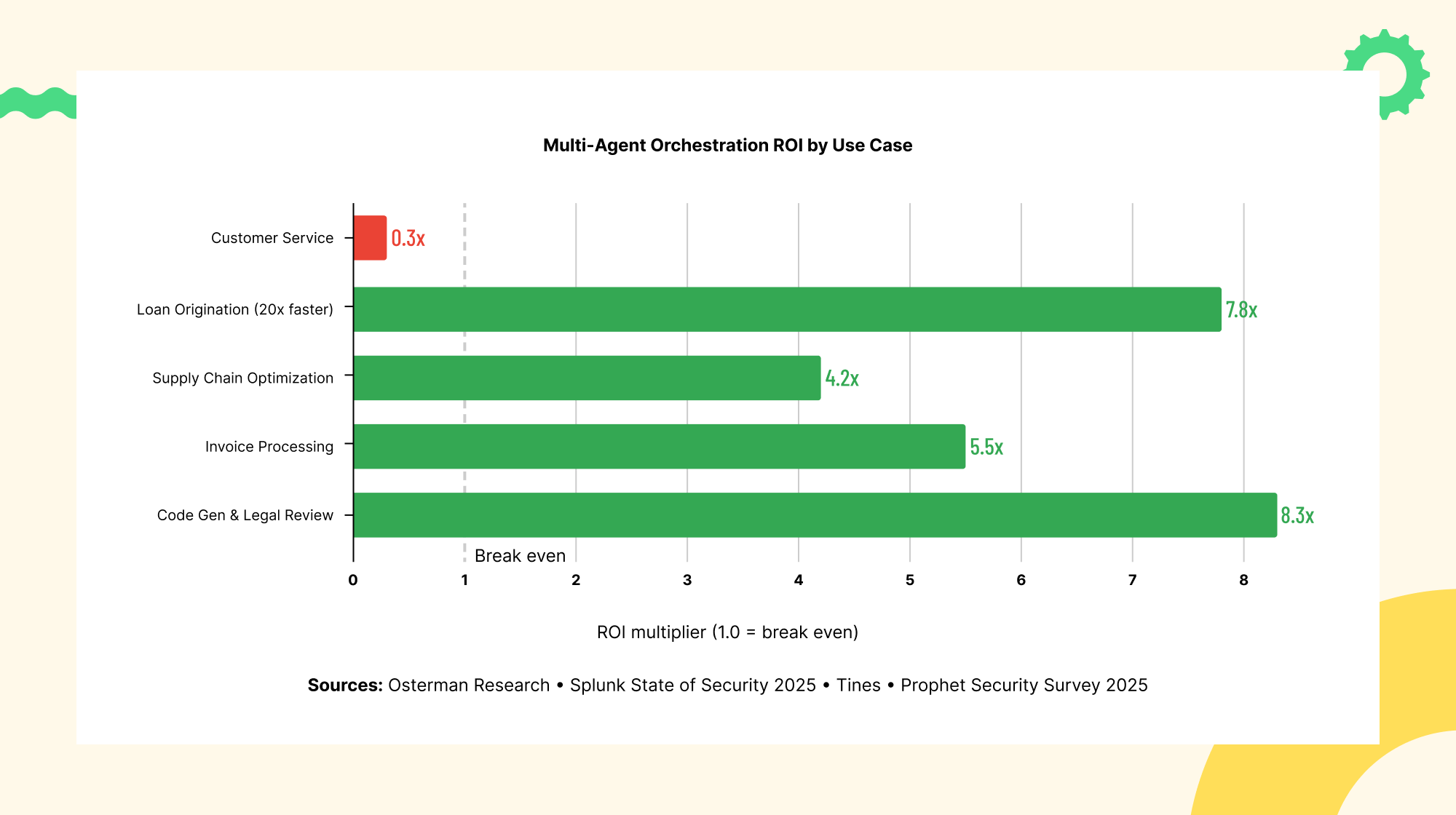

Multi-Agent Orchestration ROI by Use Case

Financial Services: Loan Origination and Payment Processing

Financial services is the sector where multi-agent orchestration has generated the most documented ROI. The complexity of regulated financial workflows (simultaneous document verification, risk assessment, compliance checking, fraud detection, and customer communication) maps directly to orchestration’s strengths. Implementations are showing 20x faster application processing, from days to hours for complex loan approvals.

Stripe’s payment system, cited above as the parallel execution example, shows the same dynamic in production: three specialized agents handling payment optimization, fraud detection, and recovery simultaneously recovered $6 billion in payments in 2024, with a 60% year-over-year improvement in retry success rates. The key insight: routing between specialized parallel agents can beat a single super-agent on both accuracy and speed.

Supply Chain and Operations: Real-Time Multi-Variable Coordination

IBM research found that 62% of supply chain leaders recognize that AI agents embedded in operational workflows accelerate speed to action. Organizations with higher AI investment in supply chain report revenue growth 61% greater than their peers. According to Microsoft research, AI-powered supply chain innovations are projected to deliver a 15% reduction in logistics costs, 35% inventory optimization, and a 65% improvement in service levels.

A consumer goods enterprise that orchestrates procurement, logistics, and customer service agents together can optimize end to end in real time, dynamically balancing supplier performance, demand forecasts, inventory levels, and shipping costs. Logistics teams deploying coordinated AI agents have cut delays by up to 40%.

Customer Service: From Ticket Routing to End-to-End Resolution

HCLTech’s orchestrated deployment achieved 40% faster case resolution and 30% workforce redeployment to higher-value activities. The architecture: a routing agent classifies and prioritizes requests; specialized knowledge agents retrieve from product documentation and order systems; a resolution agent drafts the response or executes the action; a quality agent reviews before delivery. The entire pipeline executes in seconds; human agents focus on complex escalations.
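The four-agent customer service flow maps naturally onto a short pipeline with two escalation exits. In this Python sketch, each agent is a deterministic stub, and the 0.75 confidence threshold and canned knowledge snippets are illustrative assumptions, not details of HCLTech’s deployment. Low routing confidence or a failed quality review sends the ticket to a human instead of the customer.

```python
from dataclasses import dataclass

ESCALATE_BELOW = 0.75  # assumed routing-confidence threshold


@dataclass
class Ticket:
    text: str


def routing_agent(ticket: Ticket) -> tuple[str, float]:
    """Classify the request; stands in for an LLM classifier."""
    text = ticket.text.lower()
    if "refund" in text or "charged" in text:
        return "billing", 0.9
    if "tracking" in text or "where is my order" in text:
        return "order_status", 0.85
    return "general", 0.4


def knowledge_agent(category: str) -> str:
    """Retrieve supporting context; canned snippets stand in for real lookups."""
    snippets = {
        "billing": "Duplicate charges are reversed automatically after review.",
        "order_status": "Tracking details are sent by email once the order ships.",
    }
    return snippets.get(category, "")


def resolution_agent(context: str) -> str:
    return f"Thanks for contacting us. {context}"


def quality_agent(reply: str) -> bool:
    """Crude placeholder for a reviewer: reject empty or templated replies."""
    return len(reply) > len("Thanks for contacting us. ") and "{" not in reply


def handle_ticket(ticket: Ticket) -> dict:
    category, confidence = routing_agent(ticket)
    if confidence < ESCALATE_BELOW:
        return {"status": "escalated", "reason": "low routing confidence"}
    reply = resolution_agent(knowledge_agent(category))
    if not quality_agent(reply):
        return {"status": "escalated", "reason": "failed quality review"}
    return {"status": "resolved", "category": category, "reply": reply}


print(handle_ticket(Ticket("I was charged twice for one order")))
print(handle_ticket(Ticket("Can your product integrate with my telescope?")))
```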

Software Development: Coordinated Code Generation and Review

Amazon used Amazon Q Developer to coordinate agents modernizing thousands of legacy Java applications and completed the upgrades in a fraction of the expected time. Databricks reports a 327% surge in multi-agent adoption in software development from 2025 to 2026. Teams reclaim 40+ hours monthly on routine coding tasks through orchestrated development agents; at enterprise developer costs, this translates to hundreds of thousands of dollars in annual productivity value per 10-person team.

The Governance Framework: Making Orchestration Safe at Enterprise Scale

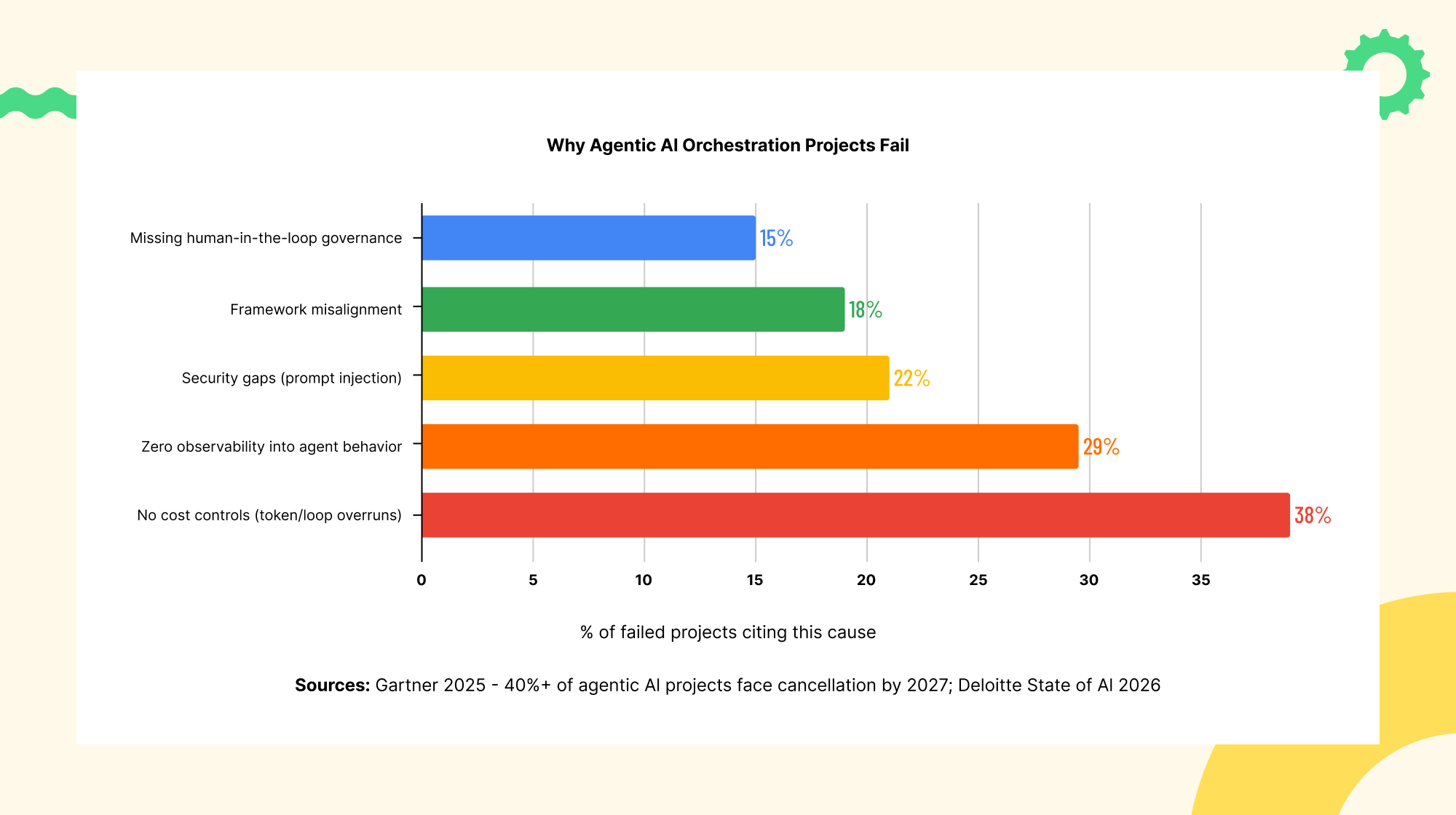

Why Agentic AI Orchestration Projects Fail

The data on failed agentic AI projects is sobering. Gartner warns that more than 40% of agentic AI projects face cancellation by the end of 2027 due to runaway costs, unclear value, or missing risk controls. Deloitte’s State of AI 2026 found only 21% of companies have a mature governance model for agents. In practice, three failure modes recur: cost overruns from uncontrolled agent loops, zero observability, and security gaps including prompt injection. All three are governance failures, not technology failures.

- Define Agent Authority Boundaries at the Infrastructure Level: Every agent needs explicitly defined authority boundaries enforced by the runtime, not just stated in the prompt, because prompt-level controls can be bypassed through adversarial inputs. CrewAI’s NVIDIA NemoClaw integration illustrates the standard: “Every action is enforced at the infrastructure level, not within the agent’s own code. This means that even if an agent’s internal logic changes or behaves unexpectedly, the runtime will still block any action that violates defined security policies.”

- Build Observability Before Deployment: Every production orchestration system needs comprehensive trace logging of every agent invocation and tool call; cost tracking at the agent, workflow, and portfolio level; accuracy measurement against defined benchmarks; latency monitoring across the orchestration chain; and anomaly detection for behavior outside expected parameters. LangSmith for LangGraph-based systems and OpenTelemetry for AutoGen are common implementations.

- Manage Costs Through Token Budgets and Recursion Limits: In one documented case, a multi-agent customer service deployment cost $47,000/month for a system that could have run as a single agent for $22,700, with only a 2.1 percentage point accuracy gain. The lesson: orchestration requires rigorous economic analysis. Code generation, legal review, and invoice processing justified multi-agent orchestration at 8.3x ROI; customer support, at 0.3x ROI, did not.
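The three practices above can share one enforcement point. The following Python sketch is a hypothetical `Runtime` (not CrewAI’s, NVIDIA’s, or any vendor’s API) that sits between agents and tools: agents request tools by name, and the runtime checks a per-agent allowlist, a step limit, and a token budget before executing, recording every decision in a trace. Because the tool registry and policies live in the runtime, a prompt-injected agent cannot talk its way past them. Token counts are passed in by the caller here; a real system would take them from the model provider’s usage data.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


class PolicyViolation(Exception):
    """Agent requested a tool outside its authority boundary."""


class BudgetExceeded(Exception):
    """Agent hit its step (recursion) limit or token budget."""


@dataclass(frozen=True)
class AgentPolicy:
    allowed_tools: frozenset[str]
    max_tokens: int   # token budget for one workflow run
    max_steps: int    # cap on tool calls, which bounds runaway loops


@dataclass
class Runtime:
    tools: dict[str, Callable[..., Any]]   # registry owned by the runtime, not the agent
    policies: dict[str, AgentPolicy]
    tokens_used: dict[str, int] = field(default_factory=dict)
    steps: dict[str, int] = field(default_factory=dict)
    trace: list[dict] = field(default_factory=list)

    def call(self, agent: str, tool: str, tokens: int, **kwargs: Any) -> Any:
        policy = self.policies[agent]
        steps = self.steps.get(agent, 0) + 1
        spent = self.tokens_used.get(agent, 0) + tokens
        if tool not in policy.allowed_tools or tool not in self.tools:
            outcome, error = "blocked:policy", PolicyViolation(f"{agent} may not call {tool}")
        elif steps > policy.max_steps:
            outcome, error = "blocked:step_limit", BudgetExceeded(f"{agent} exceeded {policy.max_steps} steps")
        elif spent > policy.max_tokens:
            outcome, error = "blocked:token_budget", BudgetExceeded(f"{agent} exceeded {policy.max_tokens} tokens")
        else:
            outcome, error = "allowed", None
        # Observability: every request is traced, including blocked ones.
        self.trace.append({"ts": time.time(), "agent": agent, "tool": tool,
                           "tokens": tokens, "outcome": outcome})
        if error is not None:
            raise error
        self.steps[agent], self.tokens_used[agent] = steps, spent  # only allowed calls count
        return self.tools[tool](**kwargs)


runtime = Runtime(
    tools={"search_kb": lambda query: f"kb results for {query!r}",
           "issue_refund": lambda order_id: f"refunded {order_id}"},
    policies={"support_agent": AgentPolicy(frozenset({"search_kb"}), max_tokens=5_000, max_steps=3)},
)

print(runtime.call("support_agent", "search_kb", tokens=1_200, query="reset password"))
try:
    runtime.call("support_agent", "issue_refund", tokens=100, order_id="A-17")
except PolicyViolation as err:
    print("blocked:", err)  # blocked: support_agent may not call issue_refund
```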

The Adoption Trajectory and Implementation Roadmap

Enterprise AI Agent Adoption — Single Agent vs Multi-Agent Orchestration

Phase 1 — Single-Agent Foundation (Weeks 1-6): Before orchestrating multiple agents, get one agent working reliably in production. Establish integration patterns, observability infrastructure, and operational familiarity with agent behavior in your specific environment. Define the task boundary precisely, establish baseline performance metrics, build the observability stack, and document failure modes.

Phase 2 — Two-Agent Orchestration (Weeks 7-12): Introduce the first agent handoff with a simple two-agent system: a primary agent executing the main task and a verification agent reviewing the output. This typically produces measurable quality improvements over the single-agent baseline while introducing the orchestration layer without full coordination complexity.

Phase 3 — Domain-Specific Multi-Agent System (Months 3-5): Expand to three-to-five agent orchestration covering a complete domain workflow. Select the right architecture pattern for your workflow structure. Implement MCP for tool integration. Establish agent authority boundaries at the infrastructure level. Deploy comprehensive observability. Validate production performance against the business case.

Phase 4 — Cross-Domain Orchestration and Scale (Month 6+): Connect orchestration systems across domains through A2A protocol. Customer-facing service orchestration connects to back-office fulfillment orchestration, which in turn connects to supply chain orchestration. This is the level at which dedicated agent operations capabilities (monitoring, tuning, cost optimization, and authority governance) become essential.

Frequently Asked Questions

Q: What is AI agent orchestration and why does it matter for enterprises?

AI agent orchestration is the coordination layer enabling multiple specialized AI agents to work together on complex multi-step workflows. It matters because single agents hit capability, accuracy, and context ceilings on complex enterprise tasks. Reported results for multi-agent architectures include 45% faster problem resolution and 60% more accurate outcomes compared to single-agent systems.

Q: What is the difference between MCP and A2A protocols?

MCP (Model Context Protocol) handles vertical integration — the standard interface through which agents connect to external tools, data sources, and enterprise systems. A2A (Agent-to-Agent protocol) handles horizontal integration — how agents from different frameworks discover each other and delegate tasks. Use MCP for tool access. Use A2A for agent-to-agent coordination.

Q: Which AI agent framework should an enterprise choose — LangGraph or CrewAI?

LangGraph is the right choice for complex, stateful workflows requiring crash recovery, full audit trails, and explicit state control — the standard for production-critical enterprise deployments. CrewAI is the right choice for rapid prototyping and role-based multi-agent collaboration. Many enterprises prototype with CrewAI and migrate critical components to LangGraph as production requirements harden.

Q: How do you measure ROI on AI agent orchestration?

Track three categories: efficiency gains (process time reduction, cost per transaction), quality improvements (accuracy rates, error rates), and workforce impact (hours reclaimed, redeployment to higher-value work). Organizations implementing orchestration report 30-50% process time reductions. Set specific baseline metrics before deployment and measure consistently.
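Two of those measurements are worth scripting so they are computed the same way every time. The sketch below uses the document’s own customer-service case ($47,000 vs $22,700 a month for a 2.1-point accuracy gain) to compute the incremental cost of each accuracy point, plus a simple process-time reduction against a baseline. The function names are illustrative, and the 60- and 36-minute process times are hypothetical inputs.

```python
def incremental_cost_per_point(multi_agent_cost: float, single_agent_cost: float,
                               accuracy_gain_points: float) -> float:
    """Extra monthly spend for each percentage point of accuracy gained."""
    if accuracy_gain_points <= 0:
        raise ValueError("no accuracy gain: the extra spend buys nothing measurable")
    return (multi_agent_cost - single_agent_cost) / accuracy_gain_points


def process_time_reduction(baseline_minutes: float, current_minutes: float) -> float:
    """Percentage reduction in process time relative to the pre-deployment baseline."""
    return 100 * (baseline_minutes - current_minutes) / baseline_minutes


# Customer-service case from the governance section: $24,300/month extra for 2.1 points.
per_point = incremental_cost_per_point(47_000, 22_700, 2.1)
print(f"${per_point:,.0f} per month per accuracy point")  # $11,571 per month per accuracy point

# Hypothetical baseline: a 60-minute process now takes 36 minutes.
print(f"{process_time_reduction(60, 36):.0f}% faster")  # 40% faster
```

Whether roughly $11,600 a month per accuracy point is worth paying depends on what one point of accuracy is worth in that workflow, which is the comparison a pre-deployment baseline makes possible.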

Q: Why are more than 40% of agentic AI projects expected to be canceled?

Gartner points to runaway costs, unclear value, and missing risk controls. In practice, these usually trace back to three gaps: uncontrolled agent loops that inflate costs, zero observability into agent behavior, and security gaps including prompt injection. The fixes: implement token budgets and recursion limits before deployment; build observability as part of the initial system; enforce security policies at the infrastructure level rather than through prompts alone.

Q: What is the right starting point for enterprise AI orchestration?

Start with a single, well-defined, high-value workflow. Get one agent working reliably in production with full observability. Then introduce the first agent handoff: a two-agent system with a primary agent and a verification agent. Organizations that try to deploy full multi-agent orchestration from day one tend to report longer time-to-production than those who build incrementally.

Conclusion: Orchestration Is the Multiplier

The question facing every enterprise AI program has evolved. The first question was whether to use AI agents. The next was which agents to deploy. The question that defines competitive position now is how effectively those agents are orchestrated.

The market signal is unambiguous. A 1,445% surge in multi-agent system inquiries. A global AI agents market growing from $7.63 billion to $10.91 billion in a single year. Forty percent of enterprise applications projected to integrate task-specific agents by the end of 2026. Wells Fargo, Stripe, Amazon, and HCLTech generating measurable value from orchestrated multi-agent systems that would be impossible with single agents in isolation.

But the failure rate is equally real. Forty percent of agentic AI projects face cancellation. Only 21% of organizations have mature governance. The gap between organizations that capture orchestration’s value and those that cancel their programs is not a technology gap. It is an architecture gap and a governance gap.

At Trantor, we help enterprise organizations move from isolated agent deployments to production-grade orchestration systems that deliver compound value over time. We bring the architectural depth to design orchestration systems matching your specific workflow complexity, technology stack, and governance requirements — and the operational experience to build the observability, cost governance, and human oversight infrastructure that keeps orchestrated systems performing reliably rather than generating the costs and failures that lead to cancellation.

If your organization is designing its first multi-agent orchestration system, evaluating frameworks for a production deployment, scaling an existing pilot to enterprise workflows, or building the governance infrastructure that makes orchestration trustworthy at scale — that is exactly the work we are built for.

Orchestration is the multiplier. Trantor helps you build it right.